Any Chat Completions MCP Server

MCP Server for Using Any LLM as a Tool

Overview

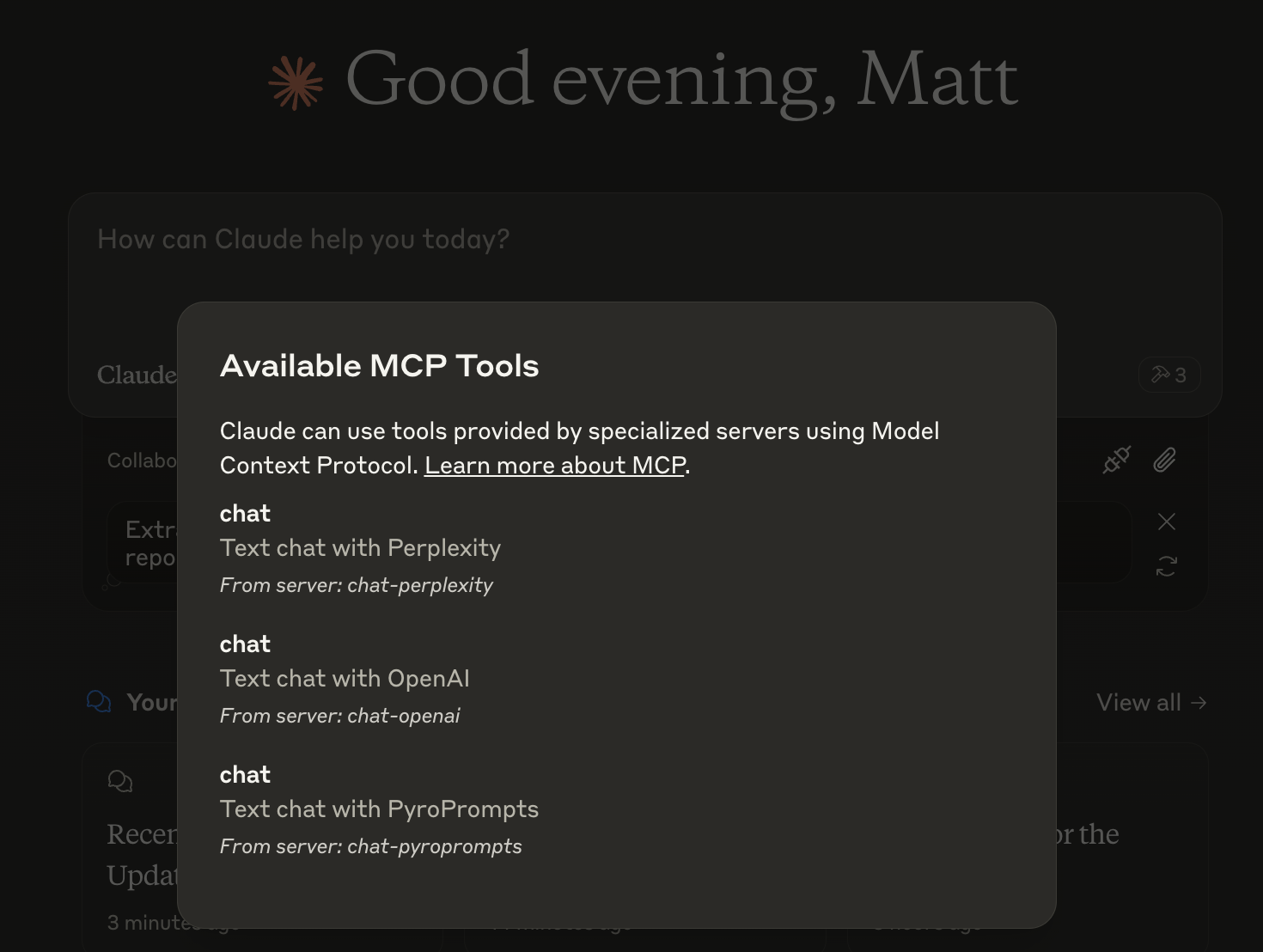

What is Any Chat Completions MCP?

Any Chat Completions MCP is an MCP server that exposes any Large Language Model (LLM) as a tool. Developers can use it to connect one or more LLMs to their applications, for example to let a chatbot or other conversational interface pass a request to another model and use its reply.

Features of Any Chat Completions MCP

- Multi-LLM Support: The server supports multiple LLMs, allowing users to choose the best model for their specific needs.

- Easy Integration: The server exposes a straightforward API, so developers can add it to their existing applications.

- Scalability: The architecture is designed to handle a large number of requests, making it suitable for both small projects and large-scale applications.

- Customizable: Users can adjust the behavior of the LLMs to suit their specific use cases.

- Open Source: Being an open-source project, it encourages community contributions and transparency.

How to Use Any Chat Completions MCP

- Installation: Begin by cloning the repository from GitHub and installing the necessary dependencies.

- Configuration: Specify which LLM to use through the server's environment variables (AI_CHAT_KEY, AI_CHAT_NAME, AI_CHAT_MODEL, and AI_CHAT_BASE_URL, as listed under Server Config).

- API Integration: Use the provided API endpoints to send requests to the server and receive responses from the LLMs.

- Testing: Test the integration in a development environment to ensure everything is functioning as expected.

- Deployment: Once tested, deploy the server to your production environment and start using the LLMs in your applications.
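
As a rough illustration of the configuration and API integration steps above, the sketch below builds (but does not send) the kind of request a chat completions backend typically expects. It assumes the configured provider follows the common OpenAI-style convention of `POST {base_url}/chat/completions` with a Bearer token; that endpoint shape and the helper name `build_chat_request` are assumptions for illustration, not taken from the project. Only the environment variable names come from the Server Config section.

```python
import json
import os
from typing import Mapping

# Settings named in the Server Config section of this page.
REQUIRED = ("AI_CHAT_KEY", "AI_CHAT_MODEL", "AI_CHAT_BASE_URL")


def build_chat_request(prompt: str, env: Mapping[str, str] = os.environ) -> dict:
    """Build, without sending, an OpenAI-style chat completions request.

    Assumption: the provider accepts POST {base_url}/chat/completions
    with Bearer authentication. Check your provider's documentation.
    """
    missing = [name for name in REQUIRED if not env.get(name)]
    if missing:
        raise ValueError("missing settings: " + ", ".join(missing))

    return {
        "url": env["AI_CHAT_BASE_URL"].rstrip("/") + "/chat/completions",
        "headers": {
            "Authorization": "Bearer " + env["AI_CHAT_KEY"],
            "Content-Type": "application/json",
        },
        "body": json.dumps(
            {
                "model": env["AI_CHAT_MODEL"],
                "messages": [{"role": "user", "content": prompt}],
            }
        ),
    }
```

Checking for missing settings before any request is made is a convenient way to cover the testing step: a misconfigured environment fails immediately with a readable message rather than with a provider-side error.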

Frequently Asked Questions

Q: What is the primary use case for Any Chat Completions MCP?

A: The primary use case is to enhance chatbots and conversational interfaces by integrating various LLMs, allowing for more intelligent and engaging interactions.

Q: Is Any Chat Completions MCP free to use?

A: Yes, it is an open-source project, which means it is free to use and modify.

Q: Can I contribute to the project?

A: Absolutely! Contributions are welcome. You can submit issues, feature requests, or pull requests on the GitHub repository.

Q: What programming languages are supported?

A: The server is designed to be language-agnostic, so its API can be accessed from popular programming languages such as Python, JavaScript, and Java.
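
To illustrate why the client language matters little: MCP clients and servers exchange JSON-RPC 2.0 messages, and a tool invocation uses the `tools/call` method. The sketch below serializes one such message in Python. The tool name and argument key in the usage note are hypothetical placeholders, since this page does not list the tools the server exposes.

```python
import json


def tools_call_message(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as one newline-terminated
    JSON-RPC 2.0 message, the framing used by the stdio transport."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message) + "\n"
```

For example, `tools_call_message(1, "hypothetical-chat-tool", {"content": "Hello"})` produces a line that could be written to the server's standard input. A real session also needs the MCP initialization handshake first, which an MCP client library normally handles.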

Q: How do I report issues or bugs?

A: You can report issues or bugs by creating an issue on the GitHub repository, providing details about the problem you encountered.

With Any Chat Completions MCP, developers can add LLM-backed conversational capabilities to their applications through a single server.

Details

Server Config

{
  "mcpServers": {
    "any-chat-completions-mcp": {
      "command": "docker",
      "args": [
        "run",
        "-i",
        "--rm",
        "ghcr.io/metorial/mcp-container--pyroprompts--any-chat-completions-mcp--any-chat-completions-mcp",
        "npm run start"
      ],
      "env": {
        "AI_CHAT_KEY": "ai-chat-key",
        "AI_CHAT_NAME": "ai-chat-name",
        "AI_CHAT_MODEL": "ai-chat-model",
        "AI_CHAT_BASE_URL": "ai-chat-base-url"
      }
    }
  }
}
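
Since the features list mentions multi-LLM support, one plausible way to use several models is to register one server entry per provider, each with its own environment values. The sketch below generates such a configuration in the same shape as the Server Config above. The entry naming scheme (`chat-<name>`), the helper names, and the provider values in the demo are hypothetical; whether one entry per provider is the intended setup should be confirmed against the project's own documentation.

```python
import json

# Image name copied from the Server Config above.
IMAGE = (
    "ghcr.io/metorial/mcp-container--pyroprompts--"
    "any-chat-completions-mcp--any-chat-completions-mcp"
)


def server_entry(key: str, name: str, model: str, base_url: str) -> dict:
    """Return one server entry in the same shape as the Server Config."""
    return {
        "command": "docker",
        "args": ["run", "-i", "--rm", IMAGE, "npm run start"],
        "env": {
            "AI_CHAT_KEY": key,
            "AI_CHAT_NAME": name,
            "AI_CHAT_MODEL": model,
            "AI_CHAT_BASE_URL": base_url,
        },
    }


def build_config(providers: list[dict]) -> dict:
    """Build an mcpServers block with one entry per provider.

    The "chat-<name>" entry key is a hypothetical naming scheme.
    """
    servers = {}
    for p in providers:
        entry_name = "chat-" + p["name"].lower().replace(" ", "-")
        servers[entry_name] = server_entry(
            p["key"], p["name"], p["model"], p["base_url"]
        )
    return {"mcpServers": servers}


if __name__ == "__main__":
    # Placeholder values only; substitute real provider settings.
    demo = [
        {"key": "provider-a-key", "name": "Provider A",
         "model": "model-a", "base_url": "https://provider-a.example/v1"},
        {"key": "provider-b-key", "name": "Provider B",
         "model": "model-b", "base_url": "https://provider-b.example/v1"},
    ]
    print(json.dumps(build_config(demo), indent=2))
```

Generating the file from a list keeps the entries consistent and avoids hand-editing nested JSON, though API keys should still be supplied from a secret store rather than committed alongside the script.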